Sony Alpha camera supported by Sony SDK (Software Development Kit)

Question: “Polarization” is a phenomenon that is used even in familiar scenes. To begin with, can you explain what kind of light “polarization” refers to?

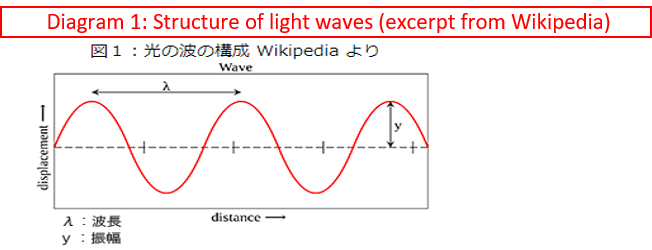

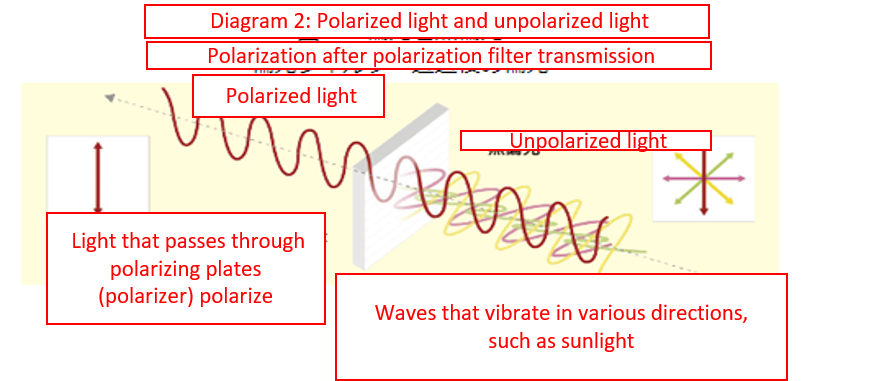

(Mr Suzuki): Let me first talk about light before we get into polarization. I’m sure many people know that light has two natures: it behaves as both a particle and a wave. Focusing on its wave nature, a wave is characterized by its amplitude and wavelength (Diagram 1). Our eyes perceive amplitude as brightness and wavelength as color. A wave also has another element: the direction in which it vibrates. Our eyes cannot perceive this vibration direction, but light such as sunlight is a mixture of waves vibrating in many directions. Light whose vibration is limited to one direction is called polarized light. On the other hand, light with many vibration directions, such as sunlight, is called unpolarized light (Diagram 2).

Diagram 1: Structure of light waves (extract from Wikipedia).

The vertical axis represents the amplitude and the horizontal axis the wavelength on the diagram. Source: Wikipedia

Diagram 2: Polarized light and unpolarized light

Question: I see. So, it’s called “polarization” since the light polarizes. However, if sunlight is unpolarized light, when does it polarize?

(Mr Suzuki): Polarized light cannot be perceived by the human eye, but it is all around us. Interestingly, unpolarized light becomes polarized when it reflects off the surfaces of glossy subjects in natural environments. For example, reflections seen on windows are polarized light. In addition, the liquid crystal panels used for TV and PC monitors have polarization filters inside, and light that passes through these filters has its vibration direction limited and becomes polarized light. This means that light emitted from a TV is actually polarized light.

Question: Really? Is TV light polarized light? I’m even more interested now.

(Mr Suzuki): This polarization filter converts unpolarized light into polarized light. However, when light that is already polarized passes through the filter, it can be shut out by adjusting the filter angle relative to the light’s vibration direction. This property is applied in polarized sunglasses. For example, road reflections that are bright to the naked eye can be suppressed by wearing polarized sunglasses. I think this example makes the phenomenon easy to understand. It works because the reflected light has a specific polarization angle, and the sunglasses are fitted with a polarizing sheet that shuts off light vibrating in that direction. In this way, polarization is a phenomenon applied in familiar scenes.
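The blocking effect described above follows Malus's law for an ideal polarizer, I = I0 · cos²θ. The following is a minimal sketch in Python; the function name is illustrative, and real filters and sunglasses are not ideal, so measured values will differ.

```python
import math

def transmitted_intensity(i0: float, angle_deg: float) -> float:
    """Malus's law for an ideal polarizer.

    i0        : intensity of the incoming, fully polarized light
    angle_deg : angle between the light's vibration direction and the
                filter's transmission axis, in degrees
    """
    return i0 * math.cos(math.radians(angle_deg)) ** 2

# Aligned, diagonal, and crossed filter orientations.
for angle in (0, 45, 90):
    print(angle, round(transmitted_intensity(1.0, angle), 3))
# prints:
# 0 1.0
# 45 0.5
# 90 0.0
```

At 90° the polarized reflection is blocked almost completely, which is the situation polarized sunglasses aim for with road glare.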

Question: I see. So “polarization” is not a special light and is a light that we are unconsciously familiar with.

The “Polarization Camera” records polarization data.

Question: Next, we’d like for you to tell us about the merits of polarization cameras. Just a simple question, but I’ve heard that equipping polarization filters on digital SLR cameras applies effects that reduce reflections from glass and water surfaces, which enables users to see inside. Why did you decide to apply the polarization filter to the camera and not to use an external filter?

The polarization camera has several merits.

Before I speak about the merits of polarization cameras, I’d like to talk about polarization filters first. As I said earlier, equipping the polarization filter on a camera lens reduces reflections, including background reflections, from the subject. However, certain tricks are required to use it, and users must rotate the polarization filter and make adjustments to find an angle that eliminates reflections as much as possible. This means users must make adjustments each time the location of the sun and subject changes.

Question: Setting the polarization filter sounds quite difficult. And hearing that it requires time and effort makes it something one would want to avoid using.

(Mr Sasaki): Yes. The problem with polarization filters is, due to their specifications, only certain angles of polarized light can be reduced, and corrections can’t be made after shooting. There’s no problem if suppressing reflections from one flat surface, such as reflections on shop windows, but it becomes tough when simultaneously reducing different reflections that face different directions, such as for reflections on windshields and side windows of cars (Diagram 3). Additionally, this is quite obvious, but images that have once been captured do not include polarized light data, and to retake the shot, users are required to shoot images by changing the polarization filter angle under the same condition.

Diagram 3: Left diagram: Reflections on side windows reduced with polarization filters. Light remains reflecting on the windshield. Right diagram: Reflections reduced with a polarization camera. Effective for both side windows and windshields.

Question: I understand that there are setting-related limitations when shooting with polarization filters and that this is not an easy task.

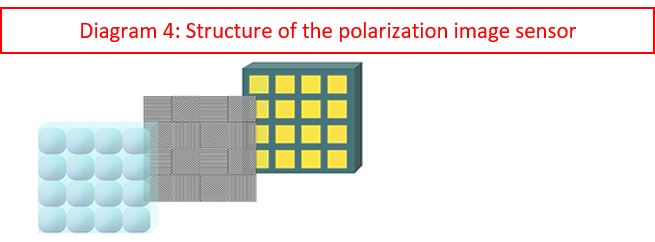

(Mr Sasaki): On the other hand, the merit of polarization cameras is that they solve these problems with polarization filters. Their defining characteristic is that each pixel on the image sensor has its own polarizer, and these polarizers face different directions.

Diagram 4: Polarizers are set at four angles, achieving a structure in which four polarization filters are used simultaneously.

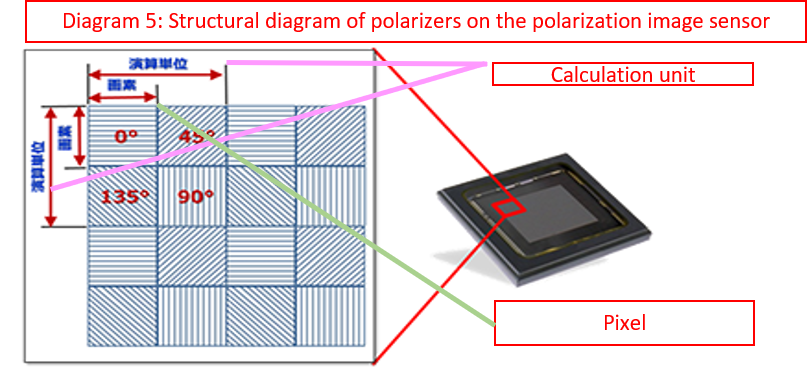

Diagram 5: Structural diagram of polarizers on the polarization image sensor.
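The per-pixel polarizer structure can be pictured as a repeating 2×2 pattern. The following is a minimal sketch, not SDK code: it assumes the raw frame is a plain list of rows and that the four angles are arranged as in the hypothetical `LAYOUT` table (the actual arrangement on the sensor may differ). It splits one raw frame into four images, one per polarizer angle.

```python
# Hypothetical 2x2 arrangement: polarizer angle at (row % 2, col % 2).
# This is an assumption for illustration; check the camera documentation.
LAYOUT = {(0, 0): 90, (0, 1): 45, (1, 0): 135, (1, 1): 0}

def split_mosaic(raw):
    """Split a raw polarization mosaic into four images keyed by angle."""
    channels = {}
    for (dr, dc), angle in LAYOUT.items():
        # Take every second row and column, starting at the pattern offset.
        channels[angle] = [row[dc::2] for row in raw[dr::2]]
    return channels

raw = [
    [10, 20, 11, 21],
    [30, 40, 31, 41],
    [12, 22, 13, 23],
    [32, 42, 33, 43],
]
channels = split_mosaic(raw)
print(channels[90])   # [[10, 11], [12, 13]]
print(channels[0])    # [[40, 41], [42, 43]]
```

Each of the four images has half the width and half the height of the raw frame, which is the "four filters at once" idea expressed as data.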

Question: What kind of effects can we expect when 4-directional polarization filters are applied?

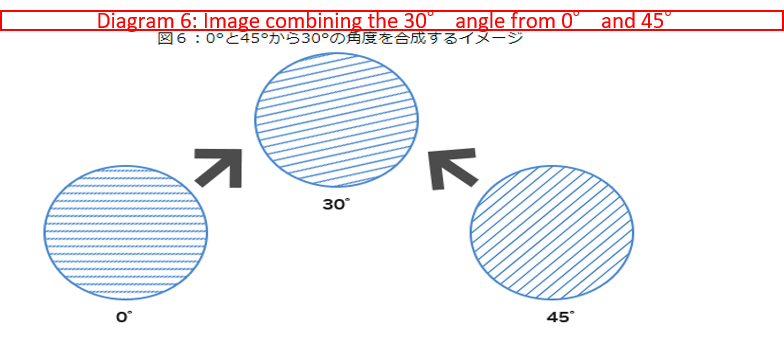

(Mr Sasaki): Since there are four directions, the state at angles in between can be calculated through interpolation. For example, the light that would pass through a polarization filter set to 30°, between 0° and 45°, can be calculated as well.

Diagram 6: Image combining the 30 degree angle and the 45 degree.

So, the rotation of polarization filters can be calculated, and users can capture images tentatively when using polarization cameras. This is because any polarization filter angle can be reproduced through calculations at a later stage.
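One standard way to reproduce an arbitrary filter angle from the four measurements uses the linear Stokes parameters. The sketch below is a textbook formulation under the assumption of ideal polarizers at 0°, 45°, 90° and 135°; it is not taken from the SDK, and the function names are illustrative.

```python
import math

def stokes(i0, i45, i90, i135):
    """Linear Stokes parameters from four ideal polarizer measurements."""
    s0 = (i0 + i45 + i90 + i135) / 2.0  # total intensity
    s1 = i0 - i90                       # 0 deg versus 90 deg component
    s2 = i45 - i135                     # 45 deg versus 135 deg component
    return s0, s1, s2

def virtual_polarizer(i0, i45, i90, i135, angle_deg):
    """Intensity an ideal polarizer at angle_deg would have passed."""
    s0, s1, s2 = stokes(i0, i45, i90, i135)
    t = math.radians(2.0 * angle_deg)
    return 0.5 * (s0 + s1 * math.cos(t) + s2 * math.sin(t))

# One pixel lit by light fully polarized at 0 deg with intensity 1.0.
pixel = (1.0, 0.5, 0.0, 0.5)
print(round(virtual_polarizer(*pixel, 30), 3))  # 0.75
```

For this pixel the result matches Malus's law (cos² 30° = 0.75), and the angle can be changed after shooting without capturing a new image.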

Question: I find it interesting that tentative images can be captured instead of having to go through the trouble of finding the right settings during shooting with polarization filters.

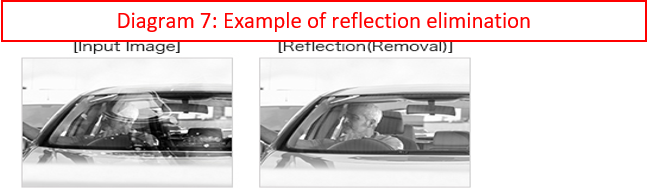

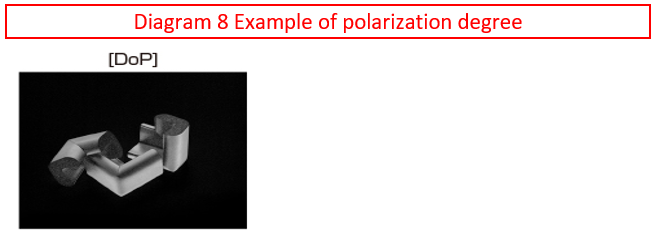

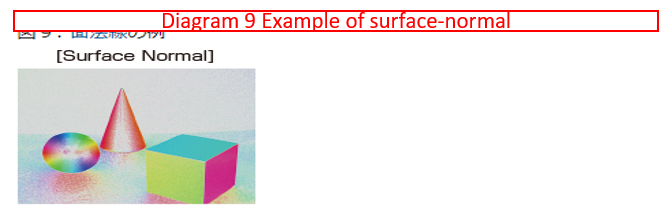

(Mr Sasaki): Yes. Moreover, this can be applied per image pixel. So, polarization cameras can suppress any reflection, regardless of the angles of reflective surfaces such as windshields and side windows. This characteristic is extremely powerful, and it may be easier to understand if you think of the camera as recording polarization data instead of simply capturing images. Since the polarization data is recorded, users can find images that suit their preferences by recalculating later. In addition to suppressing reflections, the proportion of polarized light in the total light (degree of polarization), the direction of the reflective surface of the subject (surface normal), and pressure on transparent objects can be visualized through calculation.

Diagram 7: Example of reflection elimination.

Diagram 8: Example of polarization degree

Diagram 9: Example of surface-normal.
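The degree of polarization mentioned above can be computed per pixel from the four polarizer readings. This is a minimal sketch using the standard Stokes-parameter definitions and assuming ideal polarizers; the function name is illustrative and this is not the SDK's implementation. It also returns the angle of polarization, which is commonly used as one input when estimating surface orientation.

```python
import math

def polarization_parameters(i0, i45, i90, i135):
    """Return (degree of linear polarization, angle of polarization in degrees)."""
    s0 = (i0 + i45 + i90 + i135) / 2.0
    s1 = i0 - i90
    s2 = i45 - i135
    # Fraction of the total light that is linearly polarized (0.0 to 1.0).
    dolp = math.hypot(s1, s2) / s0 if s0 > 0 else 0.0
    # Direction of vibration, folded into the range [0, 180).
    aolp = (0.5 * math.degrees(math.atan2(s2, s1))) % 180.0
    return dolp, aolp

print(polarization_parameters(1.0, 0.5, 0.0, 0.5))  # fully polarized at 0 deg
print(polarization_parameters(0.5, 0.5, 0.5, 0.5))  # unpolarized
```

Mapping these two values to brightness or color for every pixel gives images of the kind shown in the polarization-degree and surface-normal examples.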

Since polarization data, which is the source for various image processing, can be recorded in one shot, work time can be reduced, leading to better efficiency compared to shooting using polarizing filters that require shooting each time.

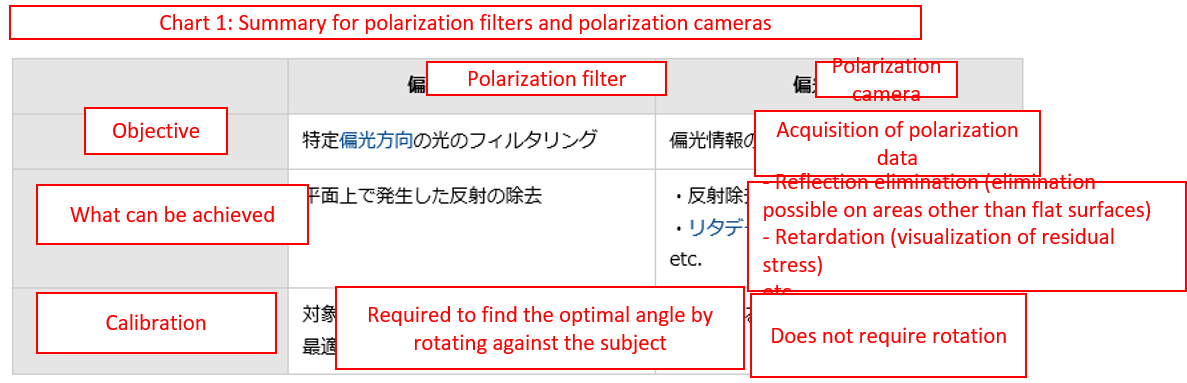

Chart 1: Summary for polarization filters and polarization cameras.

The “demosaicing” process improves the resolution.

Question: So far, we had spoken about the merits of polarization cameras. Conversely, is there anything to be careful of?

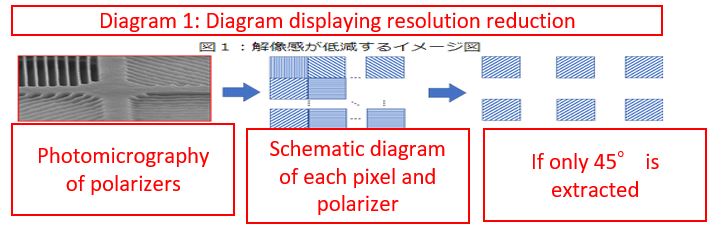

Yes. Polarization cameras have weaknesses. As we discussed, the image sensor is built with a polarizer of a different angle on each image pixel. There are four angles: 0°, 45°, 90° and 135°. I believe the greatest weakness is that the data from these four polarizers, in other words four image pixels of polarization data, is used as one unit for calculations, so the resolution drops to 1/4 (Diagram 1).

Diagram 1: Diagram displaying resolution reduction.

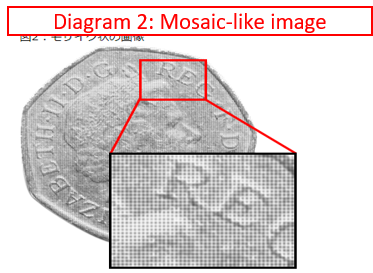

In practice, this weakness appears when the subject gives off polarized light: one of the four image pixels becomes black, and the image looks like a mosaic (Diagram 2).

Diagram 2: Mosaic-like image.

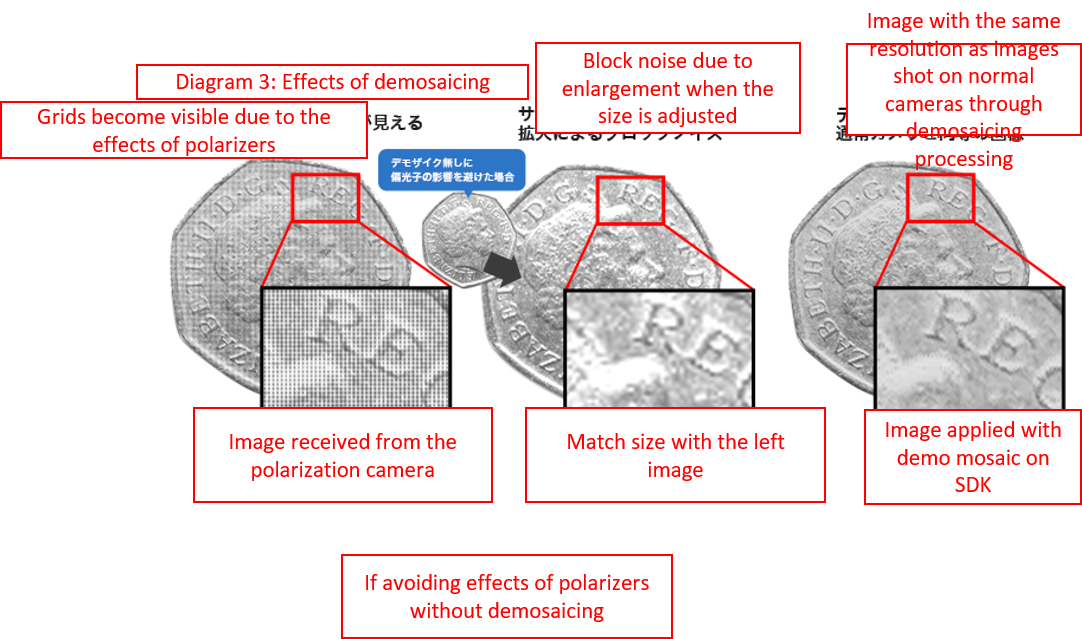

To compensate for this weakness that makes images look like a mosaic, we’ve developed a unique demosaicing process for polarization data (Diagram 3).

Diagram 3: Effects of demosaicing.
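The demosaicing developed for the camera is not described in detail here. As a rough illustration of the general idea only, the sketch below uses plain neighborhood averaging (a generic interpolation, not the SDK's method) to estimate all four angles at every pixel position. The `LAYOUT` table is a hypothetical arrangement, and the input is assumed to be a list of rows at least 2×2 in size.

```python
# Hypothetical 2x2 arrangement of polarizer angles (an assumption).
LAYOUT = {(0, 0): 90, (0, 1): 45, (1, 0): 135, (1, 1): 0}

def demosaic(raw):
    """Estimate a full-resolution image for each polarizer angle.

    For every pixel, each angle is estimated as the mean of the pixels
    with that angle inside the surrounding 3x3 window (clipped at edges).
    """
    h, w = len(raw), len(raw[0])
    out = {angle: [[0.0] * w for _ in range(h)] for angle in LAYOUT.values()}
    for r in range(h):
        for c in range(w):
            sums = {angle: 0.0 for angle in out}
            counts = {angle: 0 for angle in out}
            for rr in range(max(r - 1, 0), min(r + 2, h)):
                for cc in range(max(c - 1, 0), min(c + 2, w)):
                    angle = LAYOUT[(rr % 2, cc % 2)]
                    sums[angle] += raw[rr][cc]
                    counts[angle] += 1
            for angle in out:
                out[angle][r][c] = sums[angle] / counts[angle]
    return out

# A flat test scene: every pixel of a given angle has the same value.
values = {90: 10, 45: 20, 135: 30, 0: 40}
raw = [[values[LAYOUT[(r % 2, c % 2)]] for c in range(4)] for r in range(4)]
full = demosaic(raw)
print(len(full[0]), len(full[0][0]))  # 4 4
```

Each output image keeps the full 4×4 size instead of dropping to 2×2. Simple averaging like this blurs fine detail, which is one reason a dedicated and much heavier algorithm is worthwhile.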

Question: So demosaicing processing compensates for resolution reduction. Where is demosaicing processing achieved?

(Mr Mitsuhori): The demosaicing process that is exclusive to polarization cameras requires a massive amount of calculation and is an extremely heavy process. However, we have solved this by adopting a structure in which the camera is used only for shooting, and polarization data processing, including demosaicing, is done later on a PC. On a PC, the GPU can be used for calculations. Therefore, in addition to demosaicing, calculations that cannot be processed on the limited circuits of the camera can be performed.

Question: I see. Cameras are only for photo capturing, and the processing is done on PCs. So basically, leave it to the specialist (laughs).

(Mr Mitsuhori): Additionally, this structure has other merits. The camera sends polarization data of the source it has received to the PC, therefore enabling saving as polarization data. If outputting after calculating on the camera, the polarization data of the source is lost. However, we leave the source data on the PC so it can be used again. Even when you want to change the visualization parameter, you won’t be required to re-shoot images, and the data you have can be recalculated, greatly reducing burdens.

Question: So, in addition to enabling demosaicing, your structure has other merits as well. Since we’ve spoken about “calculations on a PC,” can we ask about the SDK? What exactly are the characteristics of the SDK?

(Mr Mitsuhori): “What can’t be seen becomes visible using polarization” is a phrase that is often used when explaining the characteristics of polarization. For example, polarization is described as something that can eliminate reflections and visualize the force applied on transparent objects, and as something that is easy to achieve. However, mathematical and programming knowledge is required to connect what users have captured with polarization cameras to what they actually want to do. As with the demosaicing process we spoke about earlier, polarization data is difficult to handle without knowledge of image processing in addition to polarization. Even if users got a polarization camera, its practicality would be questioned if they had to learn equations and programming for several months before confirming the effects. To avoid this, we provide various polarization processing tools, in addition to demosaicing, in the form of an SDK. The SDK also comes with a viewer as a sample, enabling users to confirm the effects as soon as they get their cameras.

Question: Now I understand how using the SDK contributes to making application development efficient. Users can confirm the effects immediately after getting the camera, and it definitely reduces the stress of designers during development.

Question: The SDK’s “reflection elimination” and “retardation” functions have a wide range of applications and contribute to minimizing takt time. So, please tell us what kind of applications this SDK can be applied to.

The “reflection elimination” function that has high expectations for ITS applications.

(Mr Suzuki): The “reflection elimination function” is expected to be applied in various ITS (Intelligent Transport Systems) applications in the future. “Speed violation detection” is an example of an ITS application that exists all over the world. Conventionally, the only required function was to “detect speed violations and record the vehicle’s license plate,” and if the “vehicle exterior” could be captured, the objective was achieved. However, in recent times, there has been a rise in traffic accidents caused by “distracted driving while using a smartphone,” and it has become necessary to capture images “within the vehicle.”

Question: I see. There certainly have been incidents where drivers had caused accidents since they were inattentive due to smartphone use. Then, why are polarization cameras required to check inside of a vehicle?

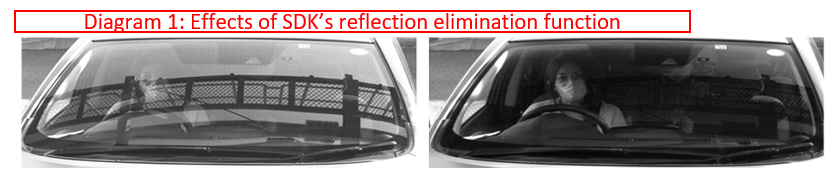

(Mr Suzuki): The biggest problem that arises here is light reflected on the front windshields of vehicles. Surrounding buildings and clouds are visible on windshields as reflected light. Therefore, it is difficult to check the inside of vehicles while evading reflections using fixed cameras. However, if the SDK “reflection elimination function” is used, suppression of reflected light off windshields becomes possible, responding to the recent needs to capture images “inside of vehicles.”

Question: By the way, I remember watching a news report announcing the establishment of the “Anti-Distracted Driving Act” in December 2019 in Japan. Demand for this function will definitely rise.

(Mr Suzuki): Yes. I agree. Other than that, though these are demands from overseas, I hear that there is a system that changes parking lot fees depending on how many people are in the vehicle. Also, to reduce traffic congestion, some countries have roads that can only be used by vehicles carrying a certain number of people. All these applications require in-vehicle visibility. The following are examples of reflection elimination assuming ITS applications. In each of these cases, you can see that the vehicle interiors are more visible because of reflection elimination.

Diagram 1: Effects of SDK’s reflection elimination function
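One common way to suppress a polarized reflection per pixel is to keep only the intensity that the best-oriented virtual polarizer would pass. The sketch below shows that textbook approach under the assumption of ideal polarizers; it is not the SDK's “reflection elimination function,” whose algorithm is not described here, and the function name is illustrative.

```python
import math

def suppress_reflection(i0, i45, i90, i135):
    """Minimum intensity over all virtual polarizer angles for one pixel."""
    s0 = (i0 + i45 + i90 + i135) / 2.0   # total intensity
    s1 = i0 - i90
    s2 = i45 - i135
    polarized = math.hypot(s1, s2)       # linearly polarized part
    return max(0.5 * (s0 - polarized), 0.0)

# Example pixel: unpolarized interior light (0.2 in total, so 0.1 behind
# each polarizer) plus windshield glare of 0.8 fully polarized at 0 deg.
pixel = (0.1 + 0.8, 0.1 + 0.4, 0.1 + 0.0, 0.1 + 0.4)
print(round(suppress_reflection(*pixel), 3))  # 0.1
```

Because the calculation runs independently for every pixel, the windshield and side windows can each be handled at their own polarization angle, which a single rotating filter cannot do.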

Even on ships and in outer space? Applications using the “reflection elimination” function that broadens the scope of ideas in various fields.

Question: Up to this point, we’ve mainly spoken about specific examples related to ITS applications. Are there any other uses that apply reflection elimination?

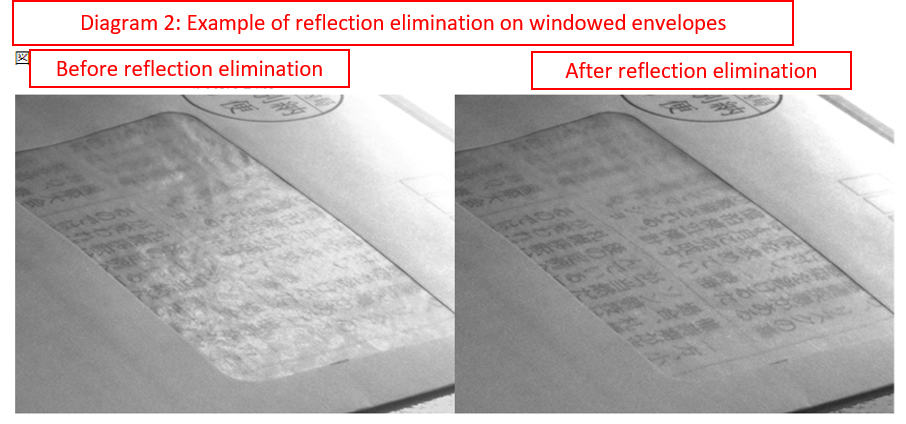

(Mr Mitsubori): I believe there are several. One you can easily imagine is an application for envelopes that have the destination section covered with film. Generally, these are called windowed envelopes, and there is a need to automate sorting processes by scanning the windowed portion using cameras. Reflections on the film are an issue for this kind of application as well.

Question: I see. Addresses on mail. I had thought that normal cameras would be able to identify the content since it is visible to the human eye.

(Mr Mitsubori): Yes. However, even when something is not a problem for human eyes, problems often arise when scanning by machine. For example, various light sources such as fluorescent lights in factories and offices cause reflections. Various materials seem to be used for the film on windowed envelopes, and though the film may look flat, it is actually wavy, which causes reflections. The strength of reflection elimination on our polarization cameras is that it can simultaneously eliminate reflections from multiple angles, so it may well be effective for reflections off these kinds of wavy or wrinkled surfaces.

Diagram 2: Example of reflection elimination on windowed envelopes.

Question: I see. So it is quite difficult to scan information using machines, even if it is possible with the human eye.

(Mr Mitsuhori): In terms of wave reflections, bright light reflected off ocean waves strains your eyes. However, eliminating these reflections using a polarization camera would help discover drifting objects in the ocean. But, we have not been able to try this idea out, so we are unsure of its effects at the moment (laughs).

Question: Polarization cameras on ships are not something that comes to mind easily.

(Mr Mitsuhori): I’ve heard that clouds polarize as well. Therefore, I’m wondering if we can take polarization cameras to outer space and film shots with the effects of clouds eliminated to capture the surface of the earth clearly.

“Exposure adjustment” is a key factor of the “reflection elimination” function. SDK visually supports the exposure condition.

Question: So depending on the idea, it would play roles in various fields. Are there any points to be careful about when using reflection elimination?

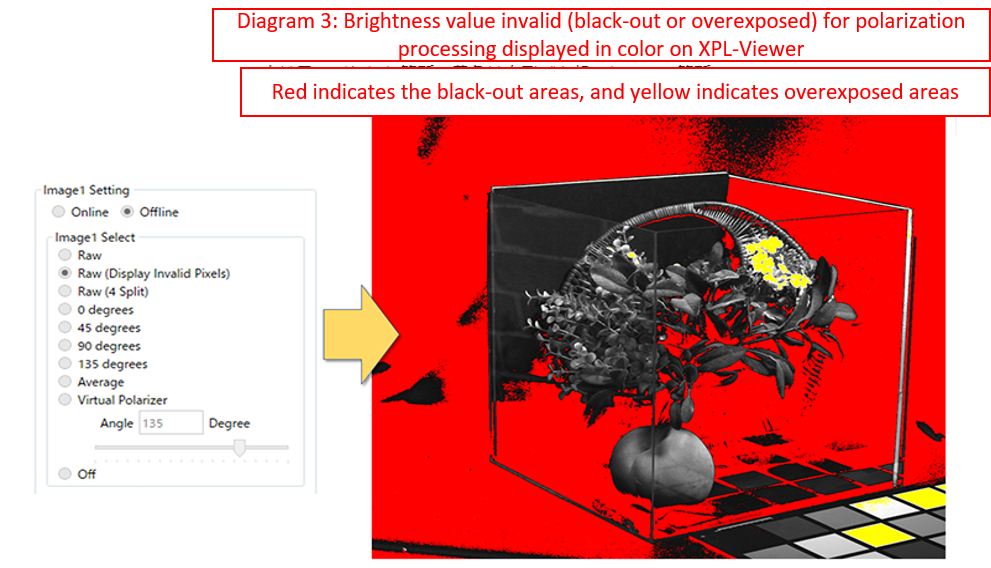

(Mr Mitsuhori): The reflection elimination process is basically effective in all scenes, but there are certain conditions that must be met. The representative condition is exposure adjustment. If exposure is not adjusted correctly, overexposure may occur on the image before polarization processing. In this case, that pixel data becomes saturated, and the data becomes meaningless. For this reason, the effects cannot be acquired even if reflection elimination processing is applied. Exposure adjustment is a basic process for imaging, but caution is required since this basic process is at times neglected when applying reflection elimination outdoors.

Question: So exposure adjustment is a crucial setting condition for reflection elimination. Did you put effort into the SDK to simplify this exposure adjustment process?

(Mr Mitsuhori): Our XPL-Viewer is equipped with a function that visually verifies the exposure status when customers evaluate polarization cameras. We hope this will be applied for ITS application evaluations as well.

Diagram 3: Brightness value invalid (black-out or overexposed) for polarization processing displayed in color on XPL-Viewer.
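The exposure check can be approximated with a simple validity mask. The sketch below is an illustration only, not XPL-Viewer's logic: it assumes 8-bit pixel values and uses hypothetical `black_level` and `white_level` thresholds to flag pixels that are blacked out or saturated.

```python
def invalid_mask(raw, black_level=0, white_level=255):
    """True where a pixel is too dark or saturated for polarization math."""
    return [[px <= black_level or px >= white_level for px in row]
            for row in raw]

def invalid_fraction(raw, black_level=0, white_level=255):
    """Fraction of pixels flagged as invalid (0.0 to 1.0)."""
    mask = invalid_mask(raw, black_level, white_level)
    total = sum(len(row) for row in mask)
    flagged = sum(sum(row) for row in mask)  # True counts as 1
    return flagged / total

raw = [
    [0, 128],
    [255, 200],
]
print(invalid_mask(raw))      # [[True, False], [True, False]]
print(invalid_fraction(raw))  # 0.5
```

If the flagged fraction is high in the region of interest, the exposure should be adjusted before any polarization processing is attempted.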

A “reflection enhancement” function that takes advantage of the reflection phenomenon and enhances the contrast of transparent objects.

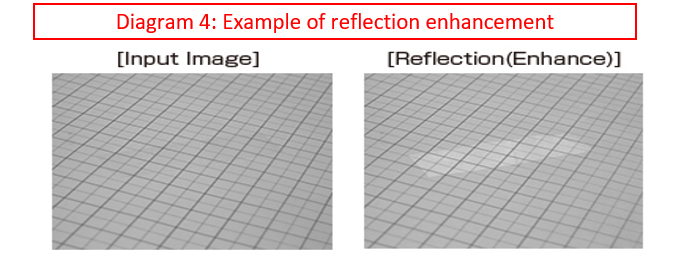

(Mr Suzuki): Up to this point, we had spoken about reflection elimination. However, if reflected components can be controlled, in contrast to reflection elimination, reflection enhancement is possible.

Question: Reflection enhancement? It’s difficult to imagine how this function could be used. Do you have any examples?

(Mr Suzuki): For example, in recent years, a labor shortage has become a global dilemma, and demand for object picking using robotic arms on manufacturing lines is on the rise. Object recognition using cameras is required to pick up target objects that are positioned randomly. One of the greatest issues here is object recognition for transparent objects.

Question: Is picking difficult if the objects are transparent?

(Mr Suzuki): Generally, the contrast between transparent objects and backgrounds is small, making object recognition extremely difficult. On the other hand, since the surface of transparent objects is generally glossy, enhancing the reflections that occur on the glossy surface will strengthen the contrast of transparent objects. Increasing the contrast will enable easier object recognition, even for transparent objects.

Diagram 4: Example of reflection enhancement.
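Reflection enhancement can be illustrated as the opposite choice from suppression: keep the intensity that the virtual polarizer angle passing the most polarized light would give. This is a sketch under the assumption of ideal polarizers, with illustrative names and made-up pixel values; it is not the SDK's “reflection enhancement” function.

```python
import math

def enhance_reflection(i0, i45, i90, i135):
    """Maximum intensity over all virtual polarizer angles for one pixel."""
    s0 = (i0 + i45 + i90 + i135) / 2.0
    s1 = i0 - i90
    s2 = i45 - i135
    return 0.5 * (s0 + math.hypot(s1, s2))

def plain_intensity(i0, i45, i90, i135):
    """What a fixed filter would record on average (half the total light)."""
    return (i0 + i45 + i90 + i135) / 4.0

background = (0.30, 0.30, 0.30, 0.30)   # unpolarized background
glossy = (0.40, 0.35, 0.30, 0.35)       # faint polarized gloss on the object

plain_contrast = plain_intensity(*glossy) - plain_intensity(*background)
enhanced_contrast = enhance_reflection(*glossy) - enhance_reflection(*background)
print(round(plain_contrast, 3), round(enhanced_contrast, 3))  # 0.05 0.1
```

In this made-up example the difference between the object and the background doubles, which is the kind of contrast gain that makes transparent objects easier to recognize.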

Question: It’s quite interesting that you’ve chosen to turn reflections, which are usually unwanted, to your advantage.

Polarized light changes if light passes through transparent objects? A “retardation” function that makes use of these characteristics.

Question: To begin with, what is retardation?

(Mr Suzuki): Up until now, we had measured the polarized light that occurs as reflections when objects are lit by light. Retardation is a measurement method that measures the changes of polarized light that passes through transparent objects instead of object reflection.

Question: So polarized light changes?

(Mr Suzuki): Yes. The inner conditions of transparent objects, especially objects made of a photoelastic material, are affected by external pressure and in turn affect light that passes through them. When polarized light passes through, the effects can be measured easily. By measuring how the polarized light has changed, we can determine the inner conditions. Retardation detects this.

Question: To be honest, this is quite difficult (laughs). Aside from the principle, what is retardation used for?

(Mr Suzuki): It can be applied to check the quality of transparent products such as bottles. When these objects are manufactured, depending on the conditions, their inner structure is not uniform and may be distorted due to residual stress. If the products are left with residual stress, in the worst-case scenario, they may crack during transportation or after they reach consumers’ hands. To avoid this, manufacturers have applied various methods to inspect their bottles before shipping. Methods using retardation are the most common for this purpose.

Question: So if it is applied in quality inspection processes for bottles, are you saying retardation is already being applied?

(Mr Suzuki): Yes. Retardation is already being applied. However, the issue is that inspection takes too long with methods that use conventional one-directional polarization filters, greatly affecting the takt time. If the SDK’s “retardation function” is applied, it would respond instantly to various changes in polarization components, making it possible to perform inspections fully online.

Question: I see. So polarization has conventionally been applied, but the polarization camera and SDK would enable more efficient inspections. How are distortions visualized by the retardation function?

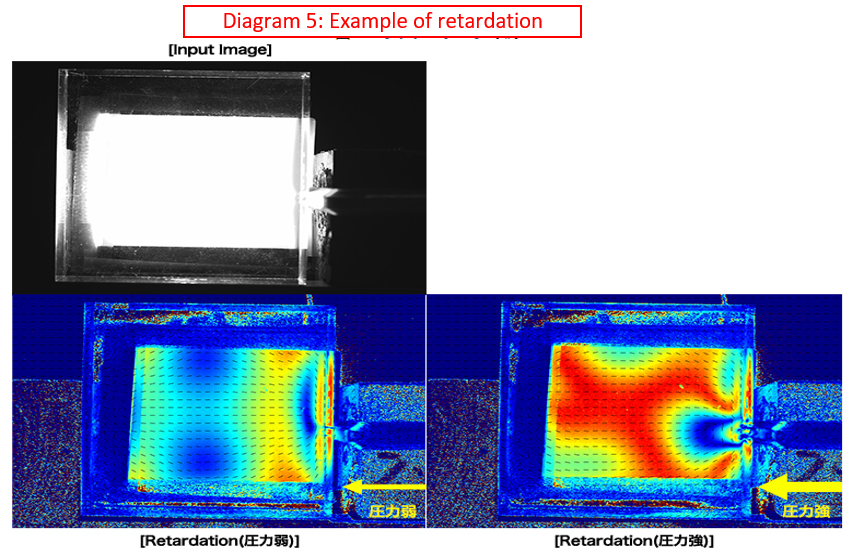

(Mr Mitsuhori): With SDK, specific values can be obtained based on the severity of the distortion. However, this is not easy for ordinary people to decipher, so we have enabled it to display the severity of distortions in colors using XPL-Viewer. Diagram 5 below shows the display result when acrylic is clamped with a vise. Since a metallic pin is placed on the right side of the vise, pressure is applied on this point as the origin. However, how much pressure is applied cannot easily be determined on the input screen. Displaying this screen on XPL-Viewer enables users to understand that pressure has been applied from the pin position based on the intensity of the vise, and distortion has occurred all over the acrylic resin.

Diagram 5: Example of retardation.
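Displaying numeric severity values as colors can be done with a simple color map. The sketch below is only an illustration of that idea: the blue-to-red scale, the function name, and the value range are assumptions, not XPL-Viewer's actual color scheme or the SDK's units.

```python
def severity_to_rgb(value, max_value):
    """Map a severity value in [0, max_value] to an (R, G, B) color.

    Low values are shown in blue and high values in red. Values outside
    the range are clamped.
    """
    t = min(max(value / max_value, 0.0), 1.0)
    return (round(255 * t), 0, round(255 * (1.0 - t)))

# Hypothetical severity values for three pixels, on a 0 to 100 scale.
for value in (0, 100, 150):
    print(value, severity_to_rgb(value, 100))
# prints:
# 0 (0, 0, 255)
# 100 (255, 0, 0)
# 150 (255, 0, 0)
```

Applying such a mapping to every pixel turns a table of numbers into an image in which the most strongly distorted areas stand out at a glance.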

Question: I see. The state of the acrylic resin cannot be seen at all on a normal camera, but visualizing it on a polarization camera makes it obvious. Now I’m convinced that “polarization cameras visualize what cannot be seen.”